Introduction

The first revolutionary development in clinical research data collection and management was the use of structured paper data collection forms to facilitate consistent data collection across multiple clinical sites. The second was the use of computers to organize, process, and store data. Web-based Electronic Data Capture (EDC) was arguably the third. The progression to web-based EDC has taken 40 years. Multiple reasons have been articulated for the slow evolution of data collection and management methods. However, neither the history of this important evolution in clinical research nor the reasons for its apparently protracted adoption have been systematically synthesized and reported.

Today, we stand at the cusp of adopting direct data acquisition from site EHRs in multicenter, prospective, longitudinal clinical studies. This new data acquisition option has been variously referred to as EHR eSource, EHR2EDC, and EHR-to-eCRF data collection. It requires the ability to identify study data in EHRs, request the data from the EHRs, reformat the data for the study, and transfer the data into the study database.1 The interoperability with clinical site EHRs required for direct data collection from EHRs is likely the next major advance in clinical research data collection and management. While varying workflows and data flows are being pursued, EHR-to-eCRF and EDC adoption are similar in that both involve significant technology, process, and behavior changes at clinical sites and study sponsors alike. Learning from the EDC adoption experience will help EHR-to-eCRF adoption proceed with less risk and return value more quickly.

Background

Although broader in literal meaning, the label Electronic Data Capture within the therapeutic development industry has historically referred to manual key entry, automated discrepancy identification, and manual discrepancy resolution. EDC enables these functions at geographically distributed sites using web-based software (i.e., an EDC system). The fundamental shift enabled by web-based EDC was decentralized entry combined with centralized organization of data processing, updating, and storage. Core functionality available in most web-based EDC systems today includes the ability for a data manager to (1) design and maintain data entry screens via the internet; (2) add and maintain univariate and complex multivariate rules to check for discrepant data; (3) develop rules for alerts and conditional form behavior, such as adding fields or forms based on user-entered data; (4) import and export data; (5) store and retrieve data; (6) implement and maintain role-based privileges; and (7) track and report the status of data entry and processing. Some systems have additional functionality supporting randomization of study participants, assignment of controlled terminology to data, and collection of data through patient-completed questionnaires. Support for specialized data collection and management has also been reported, including centralized image interpretation, classification of clinical events, management of serious adverse events in studies, and management of source data when special requirements2,3 are met.
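The distinction between univariate and multivariate discrepancy rules in item (2), and the entry-time checking behind automated discrepancy identification, can be illustrated with a minimal Python sketch. It is not drawn from any EDC product or from the reviewed literature: the field names (`systolic_bp`, `diastolic_bp`, `visit_date`, `consent_date`), the ranges, and the `EditCheck` and `run_checks` helpers are all hypothetical. A univariate rule tests one field in isolation, a multivariate rule tests a relationship between fields, and each failure becomes a query for the site to resolve.

```python
from dataclasses import dataclass
from datetime import date
from typing import Callable, Optional

# A rule inspects one entered form record and returns a query message if the
# record looks discrepant, or None if it passes. (Hypothetical structure.)
Rule = Callable[[dict], Optional[str]]


@dataclass
class EditCheck:
    name: str
    rule: Rule


def range_check(field: str, low: float, high: float) -> EditCheck:
    """Univariate check: one field must be present and lie within [low, high]."""
    def rule(record: dict) -> Optional[str]:
        value = record.get(field)
        if value is None:
            return f"{field} is missing"
        if not low <= value <= high:
            return f"{field}={value} is outside [{low}, {high}]"
        return None
    return EditCheck(f"range:{field}", rule)


def systolic_above_diastolic(record: dict) -> Optional[str]:
    """Multivariate check: systolic blood pressure should exceed diastolic."""
    sbp, dbp = record.get("systolic_bp"), record.get("diastolic_bp")
    if sbp is not None and dbp is not None and sbp <= dbp:
        return f"systolic_bp ({sbp}) is not greater than diastolic_bp ({dbp})"
    return None


def visit_not_before_consent(record: dict) -> Optional[str]:
    """Multivariate check: a study visit cannot precede informed consent."""
    visit, consent = record.get("visit_date"), record.get("consent_date")
    if visit is not None and consent is not None and visit < consent:
        return f"visit_date {visit} precedes consent_date {consent}"
    return None


# Hypothetical rule set a data manager might configure for a vital-signs form.
VITALS_CHECKS = [
    range_check("systolic_bp", 60, 250),
    range_check("diastolic_bp", 30, 150),
    EditCheck("sbp_gt_dbp", systolic_above_diastolic),
    EditCheck("visit_after_consent", visit_not_before_consent),
]


def run_checks(record: dict, checks: list[EditCheck] = VITALS_CHECKS) -> list[str]:
    """Evaluate every rule at entry time; each failure becomes a query for the site."""
    queries = []
    for check in checks:
        message = check.rule(record)
        if message is not None:
            queries.append(f"[{check.name}] {message}")
    return queries


if __name__ == "__main__":
    entered = {
        "systolic_bp": 80,
        "diastolic_bp": 95,
        "visit_date": date(2017, 2, 1),
        "consent_date": date(2017, 2, 3),
    }
    for query in run_checks(entered):
        print(query)
```

For the example record, both range checks pass and both multivariate rules raise a query. Keeping rules as configurable data (a list of named callables) rather than hard-coded form logic loosely mirrors how a data manager configures checks per study; commercial systems use their own rule-definition mechanisms, which this sketch does not attempt to model.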

Since EDC has largely replaced collection of structured data on paper forms, it is no surprise that EDC led the ranked list of implemented data systems in the recent eClinical Landscape Survey, in which all 257 eligible respondents reported use of web-based Electronic Data Capture.4 Responding companies reported managing 77.5% of their data volume in EDC systems.4 The most common types of data managed in EDC systems included eCRF data (100%), local lab data (59.5%), and Quality of Life data (59.5%). Companies also reported use of EDC systems to process Patient Reported Outcomes (ePRO) data (34.2%), Pharmacokinetic data (33.9%), and Biomarker data (28%).4

Methods

The systematic literature review supporting the revision of the Good Clinical Data Management Practices (GCDMP) EDC chapters identified many articles of historical importance but of limited value for informing present EDC practices. However, the lessons learned from the adoption of web-based EDC may be helpful in the design, implementation, and evaluation of other technological innovations involving data collection and processing in clinical studies. Toward this objective, the literature identified in the reviews supporting the recent revision of the three GCDMP EDC chapters was leveraged for the historical review reported here. The literature search criteria used and described in the recent GCDMP EDC chapter revision were executed on the following databases: PubMed (777 results), CINAHL (230 results), EMBASE (257 results), Science Citation Index/Web of Science (393 results), and the Association for Computing Machinery (ACM) Guide to the Computing Literature (115 results). A total of 1772 works were identified through the searches, which concluded on February 8, 2017. Search results were consolidated into a list of 1368 distinct articles. The searches were not restricted by date of work or publication. Two reviewers from the GCDMP EDC writing group screened each abstract to identify articles that were written in English and described EDC implementation or use in clinical research. Disagreements regarding whether an abstract met these criteria were adjudicated by the GCDMP EDC writing group. Forty-nine abstracts meeting inclusion criteria were selected for full-text review. The references of these selected works (mostly journal articles) yielded 109 additional sources. While still mostly journal articles, the works identified through references in the initial batch included a higher proportion of trade publications than the initial batch did. Eighty-five works were identified as relevant to EDC.
The Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) diagram for the review is published in each of the three GCDMP EDC chapters.

Three new articles were identified in the course of the work reported here, and one previously identified but heretofore irretrievable work was obtained, for a total of 89 included sources in this review. For this review, a single reviewer read the full text of each of the 158 identified works to identify events, descriptions, or research results relevant to the history of EDC adoption, including rationales for and barriers to adoption of EDC. EDC benefits were identified and categorized according to the mechanism by which each added value to the design, conduct, and reporting of clinical studies. Challenges encountered in EDC implementation were identified and partitioned into those that have largely been overcome and those remaining today; the latter were enumerated as gaps in the use of EDC today. Information about evaluation design, implementation context, and outcome measures was abstracted from works reporting quantitative evaluations of EDC. Quantitative evaluations were categorized according to the research design, controls, and comparators employed in the evaluation of EDC. Metrics and outcome measures used in the quantitative evaluations were enumerated and classified by the construct assessed. Heterogeneity in reported evaluation methods and outcome measures precluded quantitative meta-analysis of the studies identified through the literature search. Lastly, similarities between EDC and emerging technologies, with particular emphasis on acquisition of data directly from site EHRs, were identified. The synthesis was reviewed by two reviewers who have worked in clinical research informatics throughout the EDC adoption curve, to identify and correct significant omissions and unsupported assertions.

Results

Remote Data Entry (RDE) as the Predecessor to Web-based EDC

The earliest use of computers in research at clinical sites likely occurred in 1963.5 The earliest reports of data collection via computers at clinical sites described locally developed systems.6 An example of an early implementation is described in “Development of a Computerized Cancer Data Management System at the Mayo Clinic.”7

The foundations of distributed data entry in multicenter clinical studies originated before the advent of the internet. Starting in the early 1970s, sporadic reports of development and implementation of computer systems for Remote Data Entry (RDE) at clinical sites appeared in the literature. The earliest reported use of remote data entry in a multicenter clinical study was in the National Institutes of Health (NIH)-sponsored Kidney Transplant Histocompatibility Study in 1973, in which two of the twenty-five sites piloted data entry via remote terminals connected by telephone lines to a mainframe at the data center.8,9

In the mid-1980s, RDE use expanded with the availability of microprocessors.10,11,12,13,14,15 RDE use in multicenter studies was a sponsor- or coordinating center-driven process in which sites were provisioned with computers, data entry software, and a means of data transmission such as floppy disks or a modem. Early RDE implementations distributed database management to the clinical sites, with varying levels of site responsibility.16 Some implementations required sites to first complete paper CRFs and afterward perform first data entry with limited edit checks. Other implementations added centrally performed second entry or additional centrally run edit checks. Still others relied on single entry, with varying amounts of local edit checking at sites during or shortly after entry and differing extents of local or central batch edit checks afterward.8,9 Early registries from the 1970s through the 1990s similarly employed systems in which data were entered at clinical sites on thick or full clients, i.e., software applications where most or part of the program was stored on the user’s computer, with subsequent transfer of data to a central database.

Early commercial systems based on a distributed model (transferring data by media or modem) started appearing in the 1990s.9 The earliest of these commercial systems required distribution of laptop computers to investigational sites. These offline systems were vulnerable to local data loss and, due to their distributed nature, were more difficult to secure. Distributing hardware to sites, especially across multiple countries, was cost-prohibitive and logistically challenging. With few exceptions, RDE failed to show significant improvement over traditional paper-based data collection and central processing.17 Clinical data capture was not listed among the major improvements in clinical trial procedures and implementation realized during the 1980s.18

Collen (1990),6 Lampe and Weiler (1998),19 Hyde (1998),20 Kubick (1998),21 and Prove (2000)22 provide more complete historical descriptions of RDE. Reports of RDE continued to be published up to the turn of the century,11,23,24,25,26,27,28 when RDE ultimately gave way to web-based approaches.

Web-based EDC

Three main advances in internet technology made web-based EDC as we know it today possible: (1) internet-enabled connectivity of geographically distributed sites, (2) executable code embedded within web pages, and (3) central database storage with decentralized retrieval of information via the internet.29 Web-based forms for collecting data over the internet first appeared in 1995, and their use increased in frequency through the turn of the century.29,30,31,32,33,34,35,36,37,38 Several reports described early system architecture and design.29,34,36,39,29,41

The first large web-based trial was initiated in 1997.38 A fifteen-country rare disease trial reported in 199929 was likely initiated in the same time frame. The first surgical web-based trial was reported in 2002.42 These early web-based EDC attempts used locally developed web-based systems. During this period, early speculation and sporadic reports of cost and time savings associated with web-based EDC piqued the interest of the therapeutic development industry, and the number of commercially available systems grew. Industry-sponsored studies primarily used commercially available systems. However, locally developed web-based systems with varying combinations of functionality continued to be reported at academic centers through 2010.42,43

Even though 86% of respondents in a 2002 survey of large pharmaceutical companies agreed that “new technologies would have great impact in the near future,” and 56% agreed that there was “already a high level of urgency within their organizations to adopt new technologies,” adoption of web-based EDC remained low at the start of the new millennium.44 At the time, it was widely recognized that “reliance on traditional paper-based processes” in clinical trial data collection commonly incurred a three- to four-month delay before information became available.45 Others similarly reported longer lags in data availability with traditional paper-based data collection than with EDC electronic Case Report Forms (eCRFs).46,47,48 In the same year, an Association of Clinical Research Professionals (ACRP) survey of 2,300 respondents found that 66%, 60%, and 50% of sites, CROs, and sponsors, respectively, expected to adopt eCRFs within two years.44

EDC adoption progressed more slowly than the early surveys predicted. Reported reasons included organizational lack of strategic planning, the customized requirements of each trial, the immaturity and fragmentation of commercially available EDC software, lack of scalability from pilots to enterprise adoption, and failure to address both process and organizational change.44,45,49 The lack of process change needed to implement EDC has been attributed to failure to leverage the technology to re-engineer clinical study processes, lack of organizational strategy and planning to move from pilots to enterprise adoption, and inability to measure success.45 Further, failure to change historic delineations of job roles and responsibilities has been cited as a significant factor inhibiting process re-engineering.49 The introduction of new technologies was further inhibited by their potential to create unanticipated process bottlenecks. For example, new systems may be larger and more complex, increasing the need for training or for specialists.50 In more complex systems, fewer team members understand the workflow, data flow, or other operations of the system, increasing the potential for mistakes and lengthening the time needed to troubleshoot problems. These situations would, in turn, require more specialists or increase the number of individuals needed to configure, test, implement, troubleshoot, and maintain systems.50 EDC study start-up required more up-front work and data management staff.51 Lack of leadership mandate or support and lack of alignment throughout the organization have also been faulted for slower-than-projected adoption, as has failure to delight stakeholders (sites) with an improved trial experience.49

By 2001, only 5% of new clinical trials reported using EDC or Remote Data Entry.49 In the same time frame, CenterWatch reported that only 16% of sites were required to use EDC (probably inclusive of RDE).52 Others in the concurrent literature also grouped RDE and web-based EDC under the label “EDC”.9,17,45 By 2004, however, 70% of sites reported using an EDC system.52 El Emam et al. (2009) estimated that by the 2006–2007 time frame, 41% of Canadian trials were using EDC.53 Varied EDC system designs and architectures were reported during this time, including client-server-based systems on laptops,54 wholly internet-based approaches developed for single studies,55,56,57 and commercial web-based EDC platforms.41,58,59,60

Commercial EDC platforms were more heavily used by industry than by academia. However, disproportionately few studies were published by industry authors, because information about the methods and technology used by industry was often considered proprietary. Thus, the earliest reports were predominantly from academic institutions and did not reflect industry practices. Broad adoption of web-based EDC at academic centers lagged, with basic general-purpose or office applications such as spreadsheets and inexpensive pseudo-relational databases used instead.61 Reasons given for slower adoption in academia primarily stemmed from lack of motivation or resources to scale tools built for single studies, the high cost of early commercial solutions relative to the budgets of small investigator-initiated studies, and lack of institutional support to defray costs to academic investigators.62,63 Later, solutions such as REDCap® filled the gap in academia by providing free, publicly available software meeting the needs of academic researchers.64 Multiple such in-house solutions were developed and used at academic institutions. Today, the REDCap® system is nearly ubiquitously adopted for EDC at clinically oriented academic institutions in the United States (and likely abroad). An important difference between commercial and academic EDC platform usage is that, although the technical controls required by Title 21 CFR Part 11 are often present in software developed and implemented at academic institutions, in our experience they are not routinely validated locally to Part 11 standards. In contrast, commercial EDC platforms serving the therapeutic development industry meet these regulatory requirements for validation.

Perceived Benefits of EDC

Benefits of web-based EDC implementation such as cost savings, more timely availability of data, increased accuracy, and fewer queries (Tables 1 and 2) have been widely touted.29,65,66,67 These and other benefits stem from the internet providing both (1) rapid and continuous central data acquisition from geographically distributed sites and (2) immediate and decentralized data use. Internet-provided connectivity enabled common information system benefits such as shared data use, decision support, automation, and knowledge generation across geographically distributed sites and teams in multicenter studies. Thus, the value of EDC to an organization increases as more data, especially data traditionally managed in separate systems, become available via EDC systems.65,68 Echoing what process engineers have been saying for decades, although web-based EDC implementations will improve most data collection scenarios, their real benefit arises when processes are redesigned to take advantage of real-time information being simultaneously available to all research team members.37

Reported Benefits of EDC Over Paper and RDE Data Collection in Study Start-up.

| Benefits During Study Start-up | Mechanism |
|---|---|
| Elimination of paper Case Report Form (CRF) printing and physical distribution38,42,51,70 | A |
| Elimination of filing, storage, and retrieval tasks associated with paper CRFs and study documentation38,66,71,72 | A, P |
| Making use of sites’ existing “office grade” computers.29,38 | C, E |
| Making use of sites’ existing “office grade” web-browser software in a platform independent manner.29,38,42,73,74 | C |
| Elimination of time needed and error associated with manual generation of an annotated CRF through maintaining association of form fields with logical database storage location as a natural by-product of the database set-up.75 | A |
| Elimination of printing and physical distribution of study-related information, training materials, and job aids.38,41,42,66,73 | A |
| Use of data from previous studies to identify high performing sites or inform planning with regards to volume, task time, and elapsed time expectations.76 | KG, DS |

A: Automation; C: Connectivity; DS: Decision Support; E: Making use of less expensive or pre-existing equipment; KG: Knowledge Generation from data such as from data mining; P: Physical space savings; R: Relocation of work tasks to more efficient, better skilled, or less expensive individuals.

Reported Benefits of EDC Over Paper and RDE Data Collection in Study Conduct.

| Benefits During Study Conduct | Mechanism |
|---|---|
| Online Treatment Allocation (Randomization)38,51 and associated “Improvements in data validation at the input stage ensure that fewer invalid patients are initially signed up for the trial”45 | A |
| Use of an information system instead of or to enforce manual processes | A, R |
| Relocation of data entry to clinical sites | A, R |
| Automation of discrepancy identification and relocation of discrepancy resolution to sites | A, R |
| Centralization of information in a web-based system rather than on a site computer, as was the case with RDE, enables more comprehensive security and backup.43,73 | E, A |
| Increasing information system and data access for clinical trial monitors | A, C, DS, R |
| Workflow assistance for (1) monitor queries resulting from Source Document Verification (SDV) and (2) documentation of SDV in the EDC system may make SDV more efficient or enable capture of useful data not previously available, such as the error rate detected through SDV.75 | A |
| Replacing batch processing with the continuous flow of data17 while making information available to users around the globe facilitates control of the study at all levels.43,45,66,80 | A, C, DS, R |
| Overcomes many challenges of geographical dispersion in data collection and cleaning.29 | C |

A: Automation; C: Connectivity; DS: Decision Support; E: Making use of less expensive or pre-existing equipment; KG: Knowledge Generation from data such as from data mining; P: Physical space savings; R: Relocation of work tasks to more efficient, better skilled, or less expensive individuals.

Reported benefits of EDC are classified here according to mechanisms through which health information technology creates value.69 In addition to the categorization from this review (Tables 1 and 2), early advantages and disadvantages of EDC have been particularly well articulated by Marks et al.38

Barriers to and Unmet Potential of EDC

Barriers to use of web-based EDC that surfaced during the early phases of EDC adoption have largely been overcome. These included problems with hardware and internet provision and internet connectivity, as well as new roles and steep learning curves for data management, monitoring, and site personnel. Other barriers to early EDC adoption that have now been largely overcome include management of system access and privileges, provision of technical support for site personnel, and privacy and confidentiality concerns due to transmission of patient data over the internet.37,41,49,50 The latter necessitated new technology and methods to secure systems open to or operating over the internet.3,73 Early adopters also faced vulnerability to local bandwidth and network traffic outside the control of the study team, and increased dependence on informatics and IT expertise and on institutional approval processes, both for installing software that met local client requirements and for allowing institutional data to be housed in sponsors’ web-based systems.46,73 Early adopters identified inconsistency between sites’ institutional policies for retention of paper copies and guidelines for retention of EDC-based electronic research documentation.46 Early lack of enthusiasm among site personnel for performing data entry, perceived as “clerical”,9 was largely overcome as site personnel perceived a reduction in query-related effort and other benefits of EDC implementation.

Most early barriers to EDC have been reduced or overcome through improvements in technology, process, and implementation or through the evolution of trial personnel perceptions and acceptance over time. For example, consensus has been reached on the interpretation and implementation of Title 21 CFR Part 11.49 Today the regulation’s requirements are well understood, and organizations have procedures in place to ensure compliance. Although these challenges have largely been overcome, their cumulative impact hampered, obstructed, and slowed implementation and adoption of EDC.65

Today, despite all the progress made, EDC has still not reached its full potential. For example, EDC was supposed to eliminate recording data on paper study forms at clinical sites. Early reports documented that using a paper form as an intermediate step between the source and the EDC system “doubled the data entry workload at the sites and also increased the monitor’s workload”.37 However, use of paper forms in this way has persisted at some sites,59,67,78,81,82 indicating that further process improvements or better integration into site-specific workflow and data flow are needed. Early EDC adoption was hindered by the transiency of internet resources (e.g., controlled terminologies), web-based knowledge sources, and interfaces used for web-based information exchange.73 Transiency was a particularly difficult problem given the increasing desire to move data from one system to another and use it there, which intensified the need for system interoperability, technology reliability, and standards for data exchange. Although transiency has been overcome in some cases, significant interoperability challenges remain.

Early Site-user Evaluations of EDC

Early EDC adopters were concerned about site acceptance of new technology and process changes, in particular the aforementioned relocation of data entry to clinical sites.9 Three site investigator surveys were reported following EDC pilots. Dimenas (2001) reported that 77% of investigators and 74% of monitors “found the workload to be reasonable”.37 Similarly, 71% of 107 participants at the final investigators’ meeting indicated that the web-based EDC system offered a definite advantage over other alternatives.37 Litchfield et al. (2005) reported equivocal results, with 57% of investigators indicating that setting up the EDC study sites took either a little more or much more time than past, non-EDC paper studies.47 Half of the respondents reported that monitoring visits took more time, with only 28% reporting that monitoring visits took less time than in paper studies.47 Litchfield et al. further report that 42% perceived CRF completion in the EDC study as easier than in past paper studies, while 28% perceived it as more difficult.47 Half of the responding site investigators in this survey perceived the number of queries to be slightly lower and their handling easier with EDC, while 7% perceived the number of queries to be much lower.47 Thirty-six percent of the responding site investigators in the Litchfield survey perceived the handling of queries to be more difficult with EDC than in past paper studies.47 Investigators perceived increased time (1) to set up the study (58%) and (2) to complete the CRF (50%).47 However, in the same survey, 36% of the internet sites thought use of EDC reduced the time and costs associated with the trial overall.47 Eight of the 14 centers (57%) perceived EDC for clinical trial data recording as better or much better than conventional systems, though 28% still thought it was worse or much worse.47 A majority (71%) of the responding site investigators indicated they would prefer to use EDC over paper CRFs for future studies.47 In a separate, brief site investigator survey conducted in 2005 following a pilot EDC study, respondents reported data quality (67%), data entry (78%), and workload (59%) to be an improvement over paper.66 Overall, site investigators in the reported survey studies responded favorably to EDC.

Quantitative Evaluations of EDC

Today EDC is largely accepted as having cost, time, and quality advantages over data collection on paper.33,38,46,48,57,58,65,66,72,73,76,78,83,84 While early direct comparisons between EDC and paper were quite favorable toward EDC in terms of reduced query volume and data collection time, only a few were published. Mitchel (2006) lamented that, although internet-based trials had clear theoretical advantages over traditional paper-based clinical trials, there was a paucity of evidence, and data were needed regarding the efficiencies of data entry, trial monitoring, and data review.72 Twelve studies reporting quantitative evaluation of EDC were identified in the literature search.

Of the twelve identified EDC evaluations (Table 3), only Litchfield et al. (2005) employed a randomized, controlled design.47 Ten of the remaining eleven studies were observational. Six of the observational studies employed a comparator, such as data double or single entered centrally from paper forms on the same study or on a comparable but different study.48,58,70,85,86,87 The other four observational evaluations reported operational metrics from actual studies, including cycle times, number of data changes, and discrepancy rates from source-to-EDC audits, but with no comparator.37,59,72,81 The one remaining report made comparisons between EDC and paper data collection but did not describe the methods in sufficient detail to classify the research design.45 Surprisingly, given the desire to retain the best clinical sites and the sensitivity to site burden in clinical research,88 no formal workflow or usability studies of EDC technology were found among published EDC evaluations.

Quantitative Studies Evaluating EDC.

| Chronological List of Identified Studies Quantitatively Evaluating EDC | Randomized Controlled Evaluation | Observational With A Comparator | Observational Without A Comparator |
|---|---|---|---|
| Banik and Mochow, 8th Annual European Workshop on Clinical Data Management, 199858 | | X | |
| Green, Innovations in Clinical Trials, 200348 | | X | |
| Mitchel et al., Applied Clinical Trials, 200185 | | X | |
| Dimenas et al., Drug Information Journal, 200137 | | | X |
| Spink, IBM Technical Report, 2002*,45 | | | |
| Mitchel et al., Applied Clinical Trials, 200370 | | X | |
| Litchfield et al., Clinical Trials, 200547 | X | | |
| Meadows, Univ. of Maryland at Baltimore Dissertation, 200686 | | X | |
| Mitchel et al., Applied Clinical Trials, 200672 | | | X |
| Nahm et al., PLoS One, 200859 | | | X |
| Mitchel et al., Drug Information Journal, 201181 | | | X |
| Pawellek et al., European Journal of Clinical Nutrition, 201287 | | X | |

Randomized Controlled Evaluation: a research design where the experimental units (in this case data) are randomly assigned to an intervention (in this case EDC) versus some other data processing method as a control. Observational With A Comparator: a research design where there is no prospectively assigned intervention and no control, but data were collected for EDC and some other data processing method to indicate if one or the other is associated with a better outcome. Observational Without A Comparator: a research design where operational metrics were collected and reported for EDC use but where the same or similar metrics were not also observed for a different data processing method; studies using this design are commonly referred to as descriptive studies. * There is insufficient detail in the Spink (2002) IBM Technical Report to classify the research design.

Due to the small number of evaluations documented in the published literature, we do not know whether their results are representative of EDC evaluations in general. Based on the identified studies, it is likely that most evaluations were observational in design and used existing organizational, operational metrics similar to those listed in Table 4 as the basis of their appraisal. Cost, quality, and time metrics were variously used in the evaluations (Table 4). Only one evaluation reported metrics in all three categories. The cost, quality, and time outcome measures are summarized here to inform the design of future evaluations of data collection and management technology. Because the observed operational metrics varied between studies and were not well specified, further synthesis would be of dubious validity.

Metrics Used to Evaluate EDC.

| Metrics | Data quality | Time | Cost |
|---|---|---|---|
| Query rate (number of queries per subject, form, page, or variable)37,45,47,48,86,87,89 | X | X | |
| Query rate at the time of data entry (number of queries per subject, form, page, or variable)85 | X | X | |
| Number of queries generated by the monitoring group85 | X | X | |
| Percentage of queries according to subgroups: | X | | |
| Percentage of discrepancies resolved86 | X | | |
| Percentage of queries requiring clarification45 | X | X | |
| Number of (subjects, forms, pages, data elements, data values) requiring a modification72 | X | X | |
| Percentage of data (subjects, forms, pages, data elements, data values) requiring a modification45 | X | X | |
| Change in measures of data element central tendency, before and after cleaning81 | X | | |
| Change in measures of data element dispersion, before and after cleaning81 | X | | |
| Error rate (number of values in error/number of values assessed)59,70 | X | | |
| Percentage of invalid enrolled subjects45 | X | X | |
| Number of days between patient visit and data entry37,47 | | X | |
| Number of days from data entry to query resolution37 | | X | |
| Number of days from query generation to query answered37 | | X | |
| Number of days from query answered to query resolved37 | | X | |
| Number of days from query generation to resolution37,47 | | X | |
| Number of days between data entry and final modification72 | | X | |
| Number of days between data entry and queries resolved37 | | X | |
| Number of days between entry and clean data72 | | X | |
| Number of days between entry and form review by the CRA in the field72 | | X | |
| Number of days between data entry and form review by the in-house data reviewers72 | | X | |
| Number of days between data entry and form review by CDM72 | | X | |
| Number of days between patient’s last visit and patient locked37 | | X | |
| Number of days between First Patient First Visit (FPFV) and the date of the last data change47 | | X | |
| Trial duration48 | | X | |
| Number of days between Last Patient Last Visit (LPLV) and clean file37,47,48 | | X | |
| Cost of raising and resolving a query45 | | | X |

37 Dimenas et al., Drug Information Journal, 2001; 45 Spink, IBM Technical Report, 2002; 47 Litchfield et al., Clinical Trials, 2005; 48 Green, Innovations in Clinical Trials, 2003; 59 Nahm et al., PLoS One, 2008; 72 Mitchel et al., Applied Clinical Trials, 2006; 81 Mitchel et al., Drug Information Journal, 2011; 85 Mitchel et al., Applied Clinical Trials, 2001; 86 Meadows, University of Maryland at Baltimore Dissertation, 2006; 87 Pawellek et al., European Journal of Clinical Nutrition, 2012; 89 Takasaki et al., Journal of Nutritional Science and Vitaminology, 2018.
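Several of the Table 4 metrics are simple counts and date differences, yet the evaluations rarely specified them precisely. As a minimal sketch of how such metrics might be made explicit, the Python below computes a query rate per form and two median cycle times. The `Form` and `Query` record layouts, the choice of forms as the query-rate denominator, and the example dates are hypothetical assumptions, not definitions taken from the cited studies.

```python
from dataclasses import dataclass
from datetime import date
from statistics import median


# Hypothetical record layouts; real EDC exports differ by vendor.
@dataclass
class Form:
    form_id: str
    visit_date: date  # date of the participant visit
    entry_date: date  # date the form was entered into EDC


@dataclass
class Query:
    form_id: str
    generated: date  # date the query was raised
    resolved: date   # date the query was closed


def query_rate_per_form(queries: list[Query], forms: list[Form]) -> float:
    """Queries per entered form (the denominator choice is an assumption)."""
    return len(queries) / len(forms)


def median_days_visit_to_entry(forms: list[Form]) -> float:
    """Median number of days between patient visit and data entry."""
    return median((f.entry_date - f.visit_date).days for f in forms)


def median_days_query_to_resolution(queries: list[Query]) -> float:
    """Median number of days from query generation to resolution."""
    return median((q.resolved - q.generated).days for q in queries)


# Illustrative, made-up data for three forms and two queries.
forms = [
    Form("F1", date(2024, 1, 2), date(2024, 1, 3)),  # 1 day
    Form("F2", date(2024, 1, 2), date(2024, 1, 6)),  # 4 days
    Form("F3", date(2024, 1, 9), date(2024, 1, 9)),  # 0 days
]
queries = [
    Query("F1", date(2024, 1, 4), date(2024, 1, 5)),   # 1 day
    Query("F2", date(2024, 1, 7), date(2024, 1, 12)),  # 5 days
]

print(round(query_rate_per_form(queries, forms), 3))  # 0.667
print(median_days_visit_to_entry(forms))              # 1
print(median_days_query_to_resolution(queries))       # 3.0
```

Writing down the denominator and the start and end events this explicitly is what would make results comparable across studies, since the reported metrics were not well specified.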

Data Accuracy in EDC Evaluations

The fundamental belief that EDC improves data accuracy is widely held and is reflected in both the literature and practice. Yet, in preparing this review, we found only four comparisons of clinical trial data accuracy between EDC and traditional paper-based data collection.59,70,81,86 All four studies compared data initially recorded on paper forms with the same data subsequently entered into the EDC system. Thus, all four measured the fidelity of transcription from paper forms to the EDC system.

These studies all suffer from methodological weaknesses that limit their usefulness in assessing the accuracy of data entered at clinical sites via EDC. Only two of them59,70 reported a discrepancy or error rate. In a separate study, Staziaki et al. (2016) used a crossover experimental design to compare EDC with data collection via key entry into a spreadsheet;61 however, the sample size was too small to assess data accuracy, so the Staziaki et al. study does not appear in Table 4. In another study, Takasaki et al. (2018) reported a data quality assessment for a rehabilitation nutrition study.89 They enumerated missing, out-of-range, and inconsistent data as detected by rule-based data quality assessment, i.e., query rules. Checks for data type errors, out-of-range values, input errors, and inconsistencies detected four errors in the data entered for 797 patients. Neither the Staziaki et al. nor the Takasaki et al. study had EDC evaluation as its objective.

The paucity of evaluative results regarding data quality is striking. Multiple authors (Table 4) have reported counts and rates of data discrepancies identified through rule-based data quality assessment, also called edit checks or query rules. Discrepancy reports such as these are often, and unfortunately, confused with measures of data accuracy. Rule-identified discrepancies may serve as an indicator of data accuracy, since it is reasonable to infer that discrepancies detected by rules are a by-product of actual errors in the data, and that actual errors will often produce detectable discrepancies. However, (1) rules, real-time or otherwise, miss conformant but inaccurate data, and (2) the discrepancies identified depend on the number of rules, the logic of the rules, and the data elements to which the rules are applied. These aspects vary from study to study, and, as a result, so do the number of identified discrepancies and the percentage of fields, forms, patients, etc. with discrepancies. In other words, two data sets of equal accuracy may yield different numbers of discrepancies depending on the rules used. Thus, neither the number nor the rate of rule-identified discrepancies provides a reliable estimate of data accuracy or a basis for comparing it across studies. Rule-identified discrepancies, while easy to measure, are only a broad indicator of data accuracy, not a measure of it.
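The dependence of discrepancy counts on the rule set can be shown with a toy example. In the hypothetical Python sketch below, the same entered values are checked against two made-up range rules. The counts differ, the narrower rule flags a correct value, and the wider rule misses a value that is in range but wrong. The values and thresholds are illustrative assumptions only.

```python
# Hypothetical true and entered systolic blood pressure values (mmHg).
true_sbp = [118, 135, 142, 121, 160]
entered_sbp = [118, 153, 142, 12, 160]  # two transcription errors: 135->153, 121->12


def range_rule(lo, hi):
    """Return an edit check that flags values outside [lo, hi]."""
    return lambda value: not (lo <= value <= hi)


def count_discrepancies(values, rules):
    """Count values flagged by at least one rule."""
    return sum(1 for v in values if any(rule(v) for rule in rules))


def count_errors(values, truth):
    """Count values that differ from the (normally unknown) true values."""
    return sum(1 for v, t in zip(values, truth) if v != t)


rules_study_a = [range_rule(60, 250)]  # wide plausibility range
rules_study_b = [range_rule(90, 150)]  # narrow range

print(count_discrepancies(entered_sbp, rules_study_a))  # 1: only 12 flagged; 153 missed
print(count_discrepancies(entered_sbp, rules_study_b))  # 3: 153, 12, and the correct 160
print(count_errors(entered_sbp, true_sbp))              # 2 actual errors
```

Neither discrepancy count equals the number of actual errors, and the two rule sets would rank the same data differently.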

Accuracy of a data value can only be determined by comparison with the true value, which in most cases is not known. Redundancy-based methods of identifying data discrepancies, i.e., comparing the data with an independent source of the same information, are more comprehensive than rule-based methods in that they detect all divergences from the comparison values. For example, in-range but wrong data will be detected by redundancy-based methods to the extent that the redundant data are themselves accurate. As such, where good sources of comparison can be found, redundancy-based methods as proposed by Helms (2001) are better indicators of data accuracy than rule-based methods.9 Sending two samples from the same time-point, patient, and instance of sample collection (a split sample) to two independent labs is an example of a redundancy-based method, as is comparing data collected for a study with the original recording of the information, i.e., the source, as is done in clinical trial source data verification (SDV) processes.90 To the extent that redundancy-based methods cover all data elements of interest and employ an independent and more trustworthy comparator, they come closer to actually assessing accuracy than rule-based methods do. Comparison with the source is still not a complete measure of accuracy, because errors in the source are usually unknown and are not measured by the comparison. Among the reports of EDC data quality assessment, only one, Nahm (2008), compared EDC data with the source; however, that source was a structured form completed during or after the patient visit and may itself have contained errors.59
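A redundancy-based comparison, by contrast, checks every value against an independent comparator. The minimal Python sketch below compares hypothetical EDC values with hypothetical source values keyed by subject and field, and reports the discrepant fields and the discrepancy rate. It catches an in-range but wrong value that a wide range rule would pass, but, as noted above, it can only be as accurate as the source itself. The data layout and values are assumptions for illustration.

```python
def compare_to_source(edc, source):
    """Compare EDC values with an independent source, field by field.

    Both arguments map (subject_id, field_name) -> recorded value.
    Only fields present in both are compared. Returns the sorted list of
    discrepant keys and the discrepancy rate (discrepant / compared).
    """
    compared = edc.keys() & source.keys()
    discrepant = sorted(key for key in compared if edc[key] != source[key])
    rate = len(discrepant) / len(compared) if compared else 0.0
    return discrepant, rate


# Hypothetical data: the EDC value 153 is in range but does not match the source.
edc = {("001", "SBP"): 153, ("001", "HR"): 72, ("002", "SBP"): 142, ("002", "HR"): 80}
source = {("001", "SBP"): 135, ("001", "HR"): 72, ("002", "SBP"): 142, ("002", "HR"): 80}

discrepant, rate = compare_to_source(edc, source)
print(discrepant)  # [('001', 'SBP')]
print(rate)        # 0.25
```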

Claims that EDC increases data accuracy (Table 1) are likely based on first principles, mainly that (1) the “forced rigor” of structured fields, limited response options, and real-time checks for (or prohibition of) missing or inconsistent data during entry decreases errors, and (2) entering data closer in space and time to the original capture allows correction where there is recollection or an original recording. While forced rigor has been associated with lower error rates,90 these likely true claims have not been demonstrated in a generalizable way. Further, error rates measured for data entry were an order of magnitude lower than those measured between collected data and the medical record source documents from which they were abstracted. Unfortunately, the only appropriate conclusion from this review regarding data quality in clinical studies using EDC is that little is reported in the literature about the accuracy of data captured through EDC.

Discussion

EDC Adoption

In the last decade, the therapeutic development industry has made extensive use of EDC. In 2008, the CenterWatch EDC adoption survey of investigative sites reported that 99% of sites were using EDC in at least one of their trials, and that 73% of sites were using EDC for at least one-fourth of their studies.52 In the same survey, 36% of sites reported using some type of EDC, including interactive voice randomization, web-based data entry, fax-based data entry, or electronic patient diaries, for at least one-half of the trials they conducted.52 Only 2% of responding sites, however, indicated that they no longer collected any case report form data on paper.52 A decade later, in the 2018 eClinical Landscape Survey, 77.5% of eligible respondents reported managing CRF data in the primary EDC system.4 Today, with most traditional CRFs entered and cleaned over the internet, web-based EDC is the primary mechanism for collecting and managing CRF data.

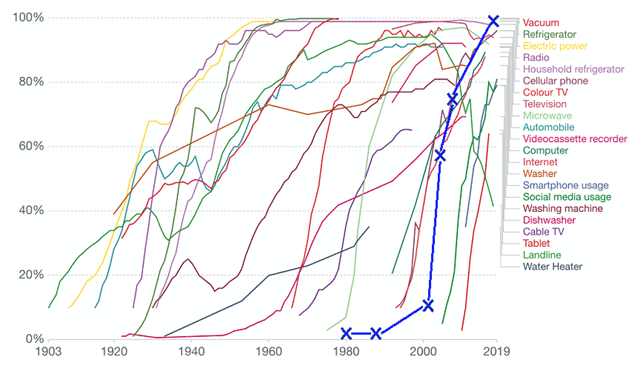

The period from the realization that clinical study data could be collected electronically at sites and made immediately available to the study team until full adoption seems long. The time from the earliest report of RDE (in 1973) to the most recent adoption reports spans four decades. However, as seen in Figure 1, the EDC adoption curve (bold blue line) is comparable with the adoption curves of most contemporary new technologies.91 The entry tail of the adoption curve may have been more protracted for EDC than for most other reported technologies; however, data from before the inflection point are not provided for most of the other innovations on the graph, so such a comparison is not possible. Given the complexities of the regulated, international nature of therapeutic development, the primacy of human subject protection concerns (including protection of individual health information), the challenges of crossing organizational boundaries for EDC implementation, and the sociotechnical aspects of the changes EDC wrought in workflow and information flow (for sites and sponsors alike), it would not be surprising if EDC adoption were slower than that of other innovations.

Estimated EDC Adoption Curve Superimposed on US Technology Adoption Data.

Source: Technology adoption in US households; data and image from Ritchie and Roser.92 The data sources from which the image was compiled are listed at https://ourworldindata.org/technology-adoption#licence. The image was reproduced and adapted here under the Creative Commons license. EDC adoption data were obtained from the studies referenced here4,52,53 and superimposed on the image as bold blue “X’s”. Point estimates were made by the authors by averaging the percent adoption of sites and of trials where both existed at the same timepoint. Thus, the actual EDC adoption curve may lie several years or percentage points in either direction. Adoption curves in some regions of the world were likely shifted significantly relative to others.

Lingering Challenges With EDC

Fully adopted does not necessarily mean fully matured. Today, web-based EDC is a valuable member of an ecosystem containing multiple data sources and information systems supporting clinical trial operations. The functionality in web-based EDC systems has become fairly stable. However, as others have noted,67,76,78 there are multiple opportunities for software vendors and organizations to move past the current state and realize significant EDC-powered improvements in clinical research data collection and management.

Limitations of EDC technology itself (1) and of the extent to which we exploit it (2 and 3) remain today and include the following:

- (1) Lack of interoperability:
  - Between EDC systems and the ever-increasing myriad of non-EDC data sources in clinical studies, such as medical devices, ePRO systems, and site EHRs.60 Addressing this gap includes moving beyond site-visit-based studies to support direct-to-consumer and pragmatic studies, in which some or all baseline and outcome data may come from EHRs, devices, or direct patient input.
  - Between EDC systems and institutional infrastructure systems, such as research pharmacy systems, electronic Institutional Review Board (eIRB) systems, and sponsor- and site-based Clinical Trial Management Systems (CTMSs).60
- (2) Lack of process re-design to leverage EDC technology more fully,80 such as routinely requiring daily data entry, review, and feedback to decrease cycle times and error impact;17,72,93 providing automated alerts and decision support; and employing surveillance analytics that continuously monitor data to detect process anomalies.
- (3) Lack of formal error rate estimation for data collected through EDC systems.

These areas are large reservoirs of untapped potential and, at the same time, remain impediments to advancing the conduct of clinical studies.
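As a rough illustration of the surveillance analytics mentioned above, the Python sketch below flags sites whose median visit-to-entry lag exceeds a threshold, which could support a daily-entry expectation. The input format, the one-day threshold, and the use of the median are hypothetical choices, not a method described in the cited literature.

```python
from collections import defaultdict
from statistics import median


def flag_slow_sites(entries, max_median_lag_days=1):
    """Flag sites whose median visit-to-entry lag exceeds a threshold.

    entries: iterable of (site_id, lag_days) pairs, where lag_days is the
    number of days between a participant visit and EDC data entry.
    Returns a sorted list of flagged site_ids.
    """
    lags_by_site = defaultdict(list)
    for site_id, lag_days in entries:
        lags_by_site[site_id].append(lag_days)
    return sorted(
        site_id
        for site_id, lags in lags_by_site.items()
        if median(lags) > max_median_lag_days
    )


# Hypothetical lag data from three sites.
entries = [
    ("S01", 0), ("S01", 1), ("S01", 1),
    ("S02", 3), ("S02", 5), ("S02", 2),
    ("S03", 1), ("S03", 0),
]
print(flag_slow_sites(entries))  # ['S02']
```

Run daily, assuming lags can be derived from EDC entry timestamps, a check like this would surface slow sites while a problem still affects few participants; appropriate thresholds would be study-specific.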

Limitations 1 and 2

The ability to accomplish the re-engineering needed to advance the conduct of clinical studies partially depends on, and is greatly augmented by, interoperability with other systems. Some of the earliest articles about EDC conceptualize EDC systems as a hub and describe their function as integrating operational and clinical study data from multiple sources.16,21,38,76,84,94 However, this comprehensive integration of infrastructure and study data sources has not come to fruition. The inability of EDC systems to integrate data from disparate sources (limitation 1) was reported early in the EDC adoption curve.43,44,45,74,95,96 Multiple recent reports provide examples of custom integration of EDC with other systems for individual studies after no available solution could be found.55,60,67,71,97,98 Today, integrating data from other clinical or operational systems with EDC remains largely a custom, point-to-point endeavor. Integration and interoperability remain a challenge in most organizations: 77% of companies represented in the 2018 eClinical Landscape Survey reported challenges loading data into their primary EDC systems.4

Interoperability-related EDC limitations are significantly exacerbated by the increasing volume and variety of new and external data sources in clinical studies. The 2018 eClinical Landscape Survey indicated that the following non-CRF data are frequently collected and managed in trials: central lab data, local lab data, quality of life data, ePRO data, pharmacokinetic data, biomarker data, pharmacodynamic data, electronic Clinical Outcome Assessment (eCOA) data, medical images, genomic data, and mobile health data.4 The 2015 and 2018 Clinical Data Management job analysis surveys corroborated the increasing use of these types of non-CRF data within clinical trials.99,100 Respondents to the 2018 eClinical Landscape Survey reported an average of 4.2 to 6.5 computer systems used in clinical trials, and more than two-thirds of respondents reported that they would increase the number of data sources over the next three years.4 Others, e.g., Lu (2010),60 Howells (2006),68 Brown (2004),84 and Comulada (2018),98 report similarly high numbers of computer systems used in studies, and Handelsman (2009)101 reports the need for integration. At the same time, additional real-world data sources are being aggressively pursued.102,103,104

Fewer than five percent of eClinical Landscape Survey respondents reported managing these non-CRF data in the primary EDC system.4 The increase in non-CRF data,99,100 coupled with the management of non-CRF data in systems other than EDC systems, indicates that EDC systems may play an increasingly smaller, but still essential, role in data collection and management. A shrinking role would limit EDC systems’ ability to advance study conduct, management, and oversight. Today, there are few commercial solutions for integrating all study data during the study. The lack of interoperability to accommodate these external data sources21,76 leaves organizations to build their own clinical study data integration hubs, use general commercial tools built for other industries, or do without needed data integration during studies (integrating data only for statistical analysis after data collection).

The cost of not integrating non-CRF data with EDC is high. Without such integration, organizations largely lack the comprehensive, near real-time information needed to better manage and conduct studies. Mitchel et al. (2006) and Summa (2004) illustrate the importance of near real-time data collection and review for identifying problems before they affect multiple subjects and for finalizing data collection and processing sooner.17,93 Wilkinson et al. (2019) report increases over the past decade in the cycle times to build the study database, enter post-visit data, and lock study databases, as well as more variability in data handling cycle times.4 The increased number of non-CRF data sources used in studies, in the absence of supporting data integration, is a possible contributor to the lengthening cycle times.105 Similarly, the burden of data collection on clinical investigational sites remains a significant concern.106 Distributing mobile devices, training patients in their use, and manually tracking their use in studies all increase site burden. The absence of supporting integration also results in boluses of after-the-fact questions when data are later integrated and reconciled. Getting studies into production later, providing data later, and encountering barriers to timely reconciliation all affect trial execution. Based on the most recent survey,4 lack of data integration with EDC systems remains a significant obstacle to optimal study conduct and management.

As the adage goes, “you can’t manage what you can’t measure,” and without integrated data, we can’t measure, much less use the data to re-engineer processes. As time and cost demands on clinical studies intensify, solving the non-CRF data integration problem and gaining the ability to leverage data in near real-time to manage studies should be one of the prime targets, if not the prime target, for study risk and cost reduction.

Fortunately, focused efforts to create standards supporting interoperability within clinical research have recently intensified. Industry, federal, patient, professional, and academic organizations and the Clinical Data Interchange Standards Consortium (CDISC) have joined with Health Level Seven (HL7) to create an organization within HL7 called VULCAN (www.hl7.org/vulcan). VULCAN’s chief mandate is to accelerate the development and adoption of the Fast Healthcare Interoperability Resources (FHIR®) data standards in clinical research. The FHIR® standards are now being widely adopted in healthcare in many countries and provide a mechanism to extract data from site EHR systems. The HL7 FHIR® standards could be further developed for use cases involving broader information exchange between research and healthcare, between organizations working together to conduct studies, and within research sites. Thus, the HL7 FHIR® standards may play a key role in advancing beyond our current state.

Using the EDC system as the original recording of study data, i.e., the source, or to receive an electronic copy of the source are two special cases of interoperability likely to grow in the near future. Two early authors, Mitchel (2010, 2013, 2014) and Vogelson (2002), promoted use of EDC as eSource; Mitchel developed, implemented, and evaluated EDC as eSource.78,93,107 An early survey revealed that 22% of respondents were entering data directly into the EDC system.45 The survey likely overstated what would be accepted today as eSource use of an EDC system, since it predated public guidance108 by four years and regulatory guidance2 by a decade. Where data are not documented in routine care, such as at commercial sites that do not provide care outside the context of clinical studies, or in studies that require data not collected or not documented in routine care, EDC eSource will likely retain a secure niche. However, sites using mainstream EHR systems to provide routine care to large patient populations may be better supported by extracting EHR data using FHIR® standards to prepopulate the eCRF. The literature emphasizes, though, that not all data are available through EHRs and that data availability will vary across sites,109,110 meaning that sites capable of EHR-to-eCRF interoperability will still need to enter some data, source or otherwise, into the EDC system. For these reasons, sponsors and sites will likely be best served by EDC systems that support both electronic acquisition of available EHR eSource data and entry of original data into the EDC system.
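To make the EHR-to-eCRF idea concrete, the Python sketch below prepopulates a hypothetical vital-signs eCRF from FHIR R4 Observation resources represented as parsed JSON dictionaries. The LOINC codes shown (29463-7, body weight; 8867-4, heart rate) are real, but the eCRF field names, the expected UCUM unit codes, and the accepted status values are assumptions. Fields with no usable EHR data are returned as missing, reflecting the point that some data will still be entered directly into EDC.

```python
# Hypothetical mapping: LOINC code -> (eCRF field name, expected UCUM unit code).
LOINC_TO_ECRF = {
    "29463-7": ("WEIGHT_KG", "kg"),  # Body weight
    "8867-4": ("HR_BPM", "/min"),    # Heart rate
}
ECRF_FIELDS = ["WEIGHT_KG", "HR_BPM", "SMOKING_STATUS"]
USABLE_STATUSES = {"final", "amended", "corrected"}


def prepopulate(observations):
    """Fill eCRF fields from FHIR Observation dicts.

    Returns (form, missing): form maps each eCRF field to a value or None,
    and missing lists the fields left for manual entry. If several
    observations map to the same field, the last one wins (a simplification).
    """
    form = {field: None for field in ECRF_FIELDS}
    for obs in observations:
        if obs.get("resourceType") != "Observation":
            continue
        if obs.get("status") not in USABLE_STATUSES:
            continue
        quantity = obs.get("valueQuantity", {})
        for coding in obs.get("code", {}).get("coding", []):
            mapping = LOINC_TO_ECRF.get(coding.get("code"))
            if coding.get("system") != "http://loinc.org" or mapping is None:
                continue
            field, unit = mapping
            if quantity.get("code") == unit:  # skip values in unexpected units
                form[field] = quantity.get("value")
    missing = [field for field, value in form.items() if value is None]
    return form, missing


observations = [
    {
        "resourceType": "Observation",
        "status": "final",
        "code": {"coding": [{"system": "http://loinc.org", "code": "29463-7"}]},
        "valueQuantity": {"value": 72.5, "unit": "kg",
                          "system": "http://unitsofmeasure.org", "code": "kg"},
    },
    {
        "resourceType": "Observation",
        "status": "final",
        "code": {"coding": [{"system": "http://loinc.org", "code": "8867-4"}]},
        "valueQuantity": {"value": 64, "unit": "beats/minute",
                          "system": "http://unitsofmeasure.org", "code": "/min"},
    },
]
form, missing = prepopulate(observations)
print(form)     # {'WEIGHT_KG': 72.5, 'HR_BPM': 64, 'SMOKING_STATUS': None}
print(missing)  # ['SMOKING_STATUS']
```

Retrieving the resources from a site's FHIR server, selecting among repeated measurements, and converting units are deliberately left out of this sketch.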

EDC Limitation 3

Finding only one instance of comprehensive, i.e., source-to-EDC, data accuracy assessment in the literature suggests that data accuracy was not a significant concern in initial EDC evaluations. The lack of routine measurement and reporting of data accuracy in practice for data collected via EDC59 is a significant but correctable oversight. The error rate for source-document-verified data could be calculated with the functionality and metadata available in many EDC systems today. In the absence of a measured data entry error rate, and without leveraging opportunities to calculate an error rate from source document verification, EDC has decreased the knowledge about data accuracy available to trialists and regulators. Reasons for not using EDC systems to assess data accuracy likely include the following: (1) methods for measuring data accuracy are poorly understood by those outside data management and statistics; (2) measuring data accuracy from SDV processes aided by EDC systems would require documenting the fields for which SDV is performed and each data error identified; and (3) monitoring and SDV procedures are usually outside the control of data management and statistical team members. Merely using available functionality to mark the fields on which SDV was performed, using EDC system metadata to estimate a source-to-EDC error rate from SDV, and reporting that error rate would significantly improve the rigor of data collected via EDC.
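The calculation proposed above can be sketched in a few lines, assuming the EDC system can export, for each field, whether SDV was performed and whether SDV led to a correction. The `FieldAudit` layout and the example counts below are hypothetical. The sketch divides SDV-identified errors by the number of fields actually verified, expresses the result per 10,000 fields, and adds a Wilson score interval as one reasonable (but not the only) way to convey uncertainty.

```python
import math
from dataclasses import dataclass


@dataclass
class FieldAudit:
    field_id: str
    sdv_performed: bool     # field marked as source-verified in EDC
    corrected_by_sdv: bool  # SDV found a source-to-EDC discrepancy


def sdv_error_rate(audits):
    """Return (errors found by SDV, number of fields on which SDV was performed)."""
    verified = [a for a in audits if a.sdv_performed]
    errors = sum(1 for a in verified if a.corrected_by_sdv)
    return errors, len(verified)


def wilson_interval(errors, n, z=1.96):
    """Approximate 95% Wilson score interval for a proportion."""
    p = errors / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return max(0.0, center - half), min(1.0, center + half)


# Hypothetical export: 2,000 verified fields with 3 errors, 500 unverified fields.
audits = [FieldAudit(f"V{i}", True, i < 3) for i in range(2000)]
audits += [FieldAudit(f"U{i}", False, False) for i in range(500)]

errors, n = sdv_error_rate(audits)
rate = errors / n
low, high = wilson_interval(errors, n)
print(errors, n)                # 3 2000
print(round(rate * 10_000, 1))  # 15.0 errors per 10,000 fields
print(round(low, 4), round(high, 4))
```

Unverified fields are excluded from the denominator, so if SDV targets high-risk fields, the resulting rate describes those fields rather than the whole database.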

“There is a major difference between a process that is presumed through inaction to be error-free and one that monitors mistakes. The so-called error-free process will often fail to note mistakes when they occur.”111 Knowing the accuracy of data during a study makes it possible to intervene and prevent future errors. Knowing the accuracy of data from a study is necessary to demonstrate that the data are capable of supporting the study conclusions; without such measurement, we do not know whether EDC data can support them. Lack of data accuracy assessment remains a major shortfall in our use of EDC technology today.

Lessons Learned from EDC Adoption

We have learned from the history of EDC adoption that non-technical factors significantly impeded adoption in the therapeutic development industry.48,77,80 For example, companies demurred from significant role re-definition and therefore missed significant re-engineering opportunity.80,101 In clinical informatics, it is commonly held that successful adoption of new information systems is “about 80% sociology, 10% medicine, and 10% technology.”112 Thus, to the extent that individuals, groups, and organizations will interact with new technology, needs assessment, design, development, dissemination, and implementation should equally account for human and sociotechnical factors and potential barriers.

The EDC adoption story demonstrates that “beneficial effects are obtained when the ways of thinking and working are changed to take advantage of the opportunities arising from having information online.”37 Web-based EDC offers the ability to centralize information while decentralizing its use, making information and information products (such as decision support and automation) available to everyone on the study team simultaneously and in real time. This enables study teams to do things that were not possible, or at least were unwieldy, before web-based EDC technology. As evidenced by the aforementioned limitations 1 and 2, implementing new technology without transformative process change to leverage these opportunities has not yielded, and will not yield, the expected improvement.48,60,67,77,80,94,113

In addition to the process re-engineering needed to realize the benefits of new technology, the infrastructure that makes up a Quality Management System, such as technical, managerial, and procedural controls, needs to be adjusted to the new technology. Roles and responsibilities have to be adjusted to the new working processes.60,67,77,80,94 Individuals in affected roles need training and time to adjust and gain experience with the new technology.60,67,77,94 The implemented processes and software need to be monitored to ensure expected performance in local contexts.77,80,74,75 This capacity building and infrastructure development is a project unto itself and should be managed as such, separately from evaluation pilots, and stabilized before the new technology is used in routine operations.80 Even then, new technology faces Solow’s Paradox, i.e., the observation that the benefits of IT investments are often not evident, or not immediately evident, as increased productivity.114 Hypothesized reasons why immediate benefit is not perceived with new technology include failure to account for work redistribution, failure to account for the work needed to achieve the increased explicitness required for automation and decision support, and failure to leverage and integrate the new technology with pre-existing technology, infrastructure, and processes. Looking back on EDC adoption, we can see their mark. The difficulty of prospectively conceptualizing and valuing opportunities made possible by new technology adds another reason why increased productivity and return on investment (ROI) are often not immediately evident. ROI projections should account for the increasing value of information technology to an organization as more data, especially data traditionally managed in separate systems, become available for use.65,68 Sharing data from early pilots of new technology may help overcome the paradox through more accurate prediction, as may methods that take into account likely causes of the IT productivity paradox.

In major re-engineering endeavors, large companies with existing and stable infrastructure (including roles, responsibilities, procedures, and technology in place) tend to have more inertia and are slower to change.17 On the other hand, companies without legacy infrastructure are usually more nimble in implementing beneficial change.17 Thus, large organizations need clear strategy, strong leadership, meticulous goal alignment, and thorough understanding of how new technology will mesh with pre-existing infrastructure in order to re-engineer at a pace similar to their smaller and newer competitors.25

In the authors’ experience, many organizations piloted one or more EDC systems. As evidenced by this literature review, very few of these pilot projects published results, and even fewer published in peer-reviewed scientific literature. Practices regarding information sharing in therapeutic development have evolved over the last three decades, and pre-competitive information sharing now occurs much more frequently than it did in the past. Examples include initiatives such as TransCelerate Biopharma (https://transceleratebiopharmainc.com/), the Clinical Trials Transformation Initiative (CTTI, https://www.ctti-clinicaltrials.org/), the Society for Clinical Data Management (SCDM) eSource Consortium (https://scdm.org/esource-implementation-consortium/), projects undertaken by members of the European Federation of Pharmaceutical Industries and Associations (EFPIA), and the VULCAN Accelerator in HL7 (http://www.hl7.org/vulcan). Through initiatives such as these, future technology evaluations are expected to be undertaken and published more collaboratively.

The history of EDC as told through the literature is that, with one exception,47 the published EDC evaluations used observational methods and empirical data without the support of experimental controls. In many cases, the research was done without contemporaneous comparators. This review found no record of ongoing productivity monitoring beyond initial evaluations. Further, variability in the outcome measures used in the reported studies precluded a formal meta-analysis to synthesize evaluation results (Table 4). A general lack of sharing of evaluation results and the heterogeneity of evaluation outcomes, along with the aforementioned barriers, likely all contributed to the “perpetual piloting” phenomenon mentioned in the literature and lengthened EDC’s adoption curve. More rigorous evaluation using consistent outcome measures, along with earlier information sharing, will likely benefit the industry in future technology adoptions. Collaborative evaluation could further reduce uncertainty early in the adoption curve and shorten it.

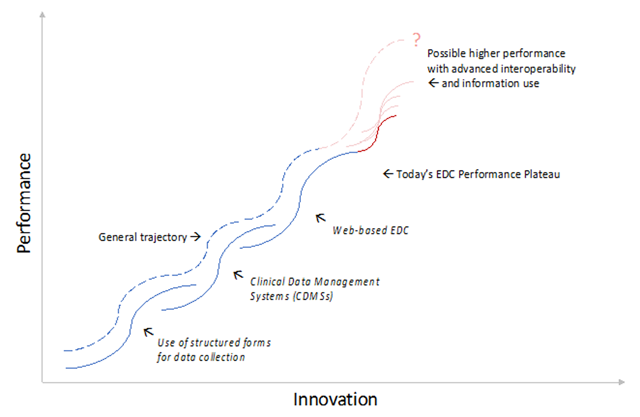

There have been three major paradigm shifts in clinical research data management: (1) the use of structured forms for data collection; (2) the advent of clinical data management systems in the late 1990s, in which data were entered, imported, integrated, stored, cleaned, coded, and otherwise processed; and (3) web-based EDC, which decentralized data entry, cleaning, and use. We argue that, although the potential of the latter is not yet fully realized, all three innovations have increased the ability to plan, conduct, and manage clinical studies, increased the types and number of studies that we can conduct, and improved the documentation, if not the quality, of study data. Three major limitations remain with EDC technology: (1) interoperability deficits, (2) unexploited process re-engineering potential, and (3) lack of error rate estimation. Significant untapped potential exists for using automation and immediate information availability to support, make, and act on study management decisions in real time, such as following up on data and resolving discrepancies within a day of their commission, and immediately detecting and intervening in operational anomalies like protocol violations and non-compliance. The potential gains are magnified when all sources of data on a clinical study are centrally available, through interoperability, to signal detection algorithms and for decision support.

Due to these limitations, in terms of diffusion of innovation,115,116 our current plateau on the innovation “S-curve” falls short of what could be achieved with current EDC technology (Figure 2).

Major Events on the Trajectory of Innovation and Performance Improvement in Data Collection, Management, and Use in Clinical Research.

Diagram adapted from: Clayton M. Christensen, “Exploring the limits of the Technology S-Curve. Part 1: Component Technologies”. Production and Operations Management 1, no. 4, (Fall 1992) 340.

Advances in processing and using different types of data (beyond those obtained from CRFs), when integrated into EDC, have the potential to extend and sustain an upward performance trajectory in EDC effectiveness, adoption, and function. Many technological advances are on the horizon, including new data sources; advanced ways to extract information from data, such as image processing and natural language processing; more advanced ways to generate knowledge from information, such as data mining and machine learning; and advanced ways to apply new knowledge to augment human performance, such as the use of artificial intelligence for signal detection and decision support. Similar advances will likely improve clinical study design, conduct, oversight, and reporting. Parallel advances in data standards, such as those currently pursued through the HL7 VULCAN accelerator for clinical research, could greatly amplify performance gains through agreement on, and availability of, more precise data definitions and mechanisms for data exchange. If fully pursued to support clinical research use cases, FHIR® standards will unlock data not previously available (or not previously computationally accessible) and will enable new uses of operational data, such as computationally aligning EHR data to a study schedule of events, as well as new opportunities to shorten therapeutic development, such as seamless conversion of open-label extension studies to EHR- or claims-based post-market registries.
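As a hypothetical illustration of computationally aligning EHR data to a study schedule of events, the Python sketch below assigns dated observations to protocol visits using target study days and visit windows. The schedule, window widths, and nearest-target tie-breaking rule are assumptions made for the example; a real protocol would define its own windowing rules.

```python
from datetime import date

# Hypothetical schedule of events: visit -> (target study day, window half-width in days).
SCHEDULE = {"Baseline": (0, 0), "Week 4": (28, 3), "Week 12": (84, 7)}


def align_to_schedule(observation_dates, baseline, schedule=SCHEDULE):
    """Map each observation date to the visit whose window contains it.

    Study day is counted from baseline (day 0). If windows overlap, the
    visit with the closest target day wins (ties broken by visit name).
    Dates outside every window map to None.
    """
    aligned = {}
    for obs_date in observation_dates:
        study_day = (obs_date - baseline).days
        candidates = [
            (abs(study_day - target), visit)
            for visit, (target, half_width) in schedule.items()
            if abs(study_day - target) <= half_width
        ]
        aligned[obs_date] = min(candidates)[1] if candidates else None
    return aligned


baseline = date(2024, 3, 1)
dates = [date(2024, 3, 1), date(2024, 3, 30), date(2024, 4, 15), date(2024, 5, 20)]
for obs_date, visit in align_to_schedule(dates, baseline).items():
    print(obs_date, visit)
# 2024-03-01 Baseline
# 2024-03-30 Week 4
# 2024-04-15 None
# 2024-05-20 Week 12
```

Observations that fall outside every window (here, study day 45) are left unassigned rather than forced onto a visit, so they can be reviewed instead of silently populating the eCRF.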

Many organizations will pilot and eventually adopt new data sources and novel ways of collecting, processing, and using data in clinical studies. To gain value, organizations will need to integrate these into existing processes or re-engineer existing processes to exploit them optimally. For example, artificial intelligence for detecting operational anomalies is easy to implement separately from existing data collection and processing pipelines. However, with one recent and notable exception, no organization has yet integrated such outputs into study data processes or into at-scale, routine use by clinical trial teams. The EDC adoption story indicates that each advance may experience a protracted entry onto the adoption curve for reasons similar to those experienced by EDC, and will likely take the characteristic 15–20 years117 to reach maturity and widespread adoption, as have many other recent technological advances (Figure 1). Each of these advances has the potential to sustain performance increases in clinical research, moving us beyond today’s EDC (Figure 2). While admittedly speculative, the next major paradigm change in data collection, management, and use in clinical studies may be the comprehensive adaptation and adoption of the FHIR® standards in clinical research. Applying the FHIR® approach to enable seamless exchange of data within clinical site facilities, between clinical sites and study teams, and among organizations working together to conduct clinical studies would end the current siloed state.

Beyond EDC

What can we learn from the history of EDC adoption to help us move beyond today’s EDC, to derive greater benefit from new technology, and to do so faster?

In the beginning, EDC processes and technology were new to site investigators, site staff, sponsors, and regulators. EDC adoption had a pervasive impact across processes and roles at sites, sponsors, and regulators. This broad impact involved new technology, processes, tasks, skills, and roles, and it changed ways of working for individuals, groups, and organizations. Examples include the following:

EDC changed the data collection and submission workflow at clinical sites.

EDC shifted work to, and required new competencies of, investigators and site staff, such as entering study data and reporting software problems.

EDC forced site staff to fundamentally change the thought processes they used in data collection; for example, paper forms often served as cognitive aids encoding CRF completion instructions and, in some cases, as worksheets and checklists supporting systematic and complete data collection. EDC challenged but did not completely overturn this practice.

EDC changed the data review and auditing process for sponsors and regulators.

EDC shifted new work to, and some existing work away from, data management.

EDC added steps to site start-up, trial monitoring, and study management, such as a) obtaining access to and training on EDC software, b) using EDC software to document SDV, and c) having edit checks and workflow ready before the start of enrollment.

The automated alerts and dynamic form behavior available in today’s EDC systems require data managers to be skilled at workflow analysis and process design.

EDC requires the involvement of new roles (and people) at trial sites, such as information technology support staff.

Using computer systems to provide automation, such as generating additional pages and forms and communicating data discrepancies directly and immediately to sites, required increased explicitness, detail, and precision in study specifications. For example, query wording had to be written so that it did not require manual editing or customization, because sites saw the queries immediately. In general, increasing the level of automation increases the explicitness required to program computers to perform operations previously handled by humans. Because they demand this explicitness, which often had not previously been undertaken, computer systems are perceived as increasing rather than decreasing work, i.e., the IT Paradox.
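The demand for explicitness can be illustrated with a minimal Python sketch of an automated range check whose site-facing query text is fully written in advance, since sites see the query the moment the check fires. The field name, limits, and wording are hypothetical and are not drawn from any particular EDC system; real systems express such rules in their own configuration languages.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class RangeCheck:
    field: str
    low: float
    high: float
    # Final, site-facing wording: it must read correctly without manual editing.
    query_text: str

    def evaluate(self, record: Dict[str, Optional[float]]) -> Optional[str]:
        """Return the query to send to the site, or None if the value passes."""
        value = record.get(self.field)
        if value is None:
            return (f"{self.field} is missing. Please enter the value "
                    "or indicate why it was not collected.")
        if not (self.low <= value <= self.high):
            return self.query_text.format(value=value, low=self.low, high=self.high)
        return None


# Hypothetical check; the limits are illustrative only.
CHECKS: List[RangeCheck] = [
    RangeCheck("systolic_bp", 60, 250,
               "Systolic BP of {value} mmHg is outside the expected range "
               "({low}-{high} mmHg). Please verify against the source and "
               "correct or confirm the value."),
]


def run_checks(record: Dict[str, Optional[float]],
               checks: List[RangeCheck] = CHECKS) -> List[str]:
    """Return every query that would be raised immediately on data entry."""
    return [q for q in (c.evaluate(record) for c in checks) if q is not None]
```

Every branch, including the missing-value case, needs wording that a site can act on without a data manager rewriting it first; on paper, that refinement often happened informally before a query was sent.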

It is not evident from the reviewed literature that the breadth and depth of these fundamental shifts were expected or clearly articulated at the outset of EDC. Similarly, it is not evident from the reviewed literature that the new possibilities created by the increased information content and availability of EDC data were recognized, clearly (i.e., mechanistically) articulated, or exploited by early adopters. These possibilities are now better articulated in the Good Clinical Data Management EDC chapters. Additionally, the increased explicitness spurred by EDC has offered new possibilities, such as automated generation of forms used only under special conditions (e.g., an early withdrawal form) and automated detection of protocol violations, alerts, and other events. Other benefits derived from increased protocol specificity, and from EDC use in general, include gaining tracking information as a by-product of work tasks and the ability of geographically distributed teams to simultaneously access, review, and respond to data, and to alerts on data, as soon as they are entered. Though this is speculative, the lack of broad awareness of these new opportunities, and of the cost or added value of each, likely contributed to the delay in EDC adoption.
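The conditional behavior described above can be sketched in a few lines of Python, assuming a hypothetical rule set: an early withdrawal form is added only when the disposition field indicates withdrawal, a serious adverse event form is added only when an adverse event is marked serious, and a protocol-deviation alert is raised when the first dose precedes informed consent. The form names, field names, and coded values are illustrative assumptions, not features of any specific EDC product.

```python
from datetime import date
from typing import Any, Dict, List, Optional


def required_forms(record: Dict[str, Any]) -> List[str]:
    """Return the forms a participant needs, adding conditional forms from entered data."""
    forms = ["Demographics", "Vital Signs"]  # always-present forms (illustrative)
    if record.get("disposition") == "WITHDRAWN":
        forms.append("Early Withdrawal")      # generated only under this condition
    if record.get("ae_serious") is True:
        forms.append("Serious Adverse Event")
    return forms


def protocol_alerts(record: Dict[str, Any]) -> List[str]:
    """Return protocol-violation alerts detected automatically from entered data."""
    alerts: List[str] = []
    consent: Optional[date] = record.get("consent_date")
    first_dose: Optional[date] = record.get("first_dose_date")
    if consent is not None and first_dose is not None and first_dose < consent:
        alerts.append("Protocol deviation: first dose given before informed consent.")
    return alerts
```

Each rule is only possible because the protocol condition has been stated precisely enough to be evaluated by a computer, which is the same explicitness that was perceived as added work.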

Many emerging advances apply narrowly to one type of data or another. For example, natural language processing generates or extracts structured information from free text. Similarly, artificial intelligence operates over existing data and extends the possible uses of the data through automation or decision support. Though these certainly offer new possibilities for data use, they are focused on narrow use cases. In contrast, a new universe of opportunities may be opened by comprehensive and easily implementable data definition and exchange standards that bridge existing and previously computationally impermeable boundaries, such as those between the trial sites, healthcare facilities, sponsors, central labs, core labs, and central reading centers participating in study conduct, and those separating studies from new sources of data, such as healthcare claims or medical devices. The data standards that would allow this, albeit slow in coming and with much investment remaining, offer new opportunities for gaining value from data use, like aqueducts through a nation of information deserts.

One area of information exchange already opened by the HL7 FHIR® standards is the direct extraction of data from Electronic Health Records (EHRs) and the ongoing transmission of those data to a study EDC system. Though individuals and organizations have pursued direct use of EHR data in longitudinal studies for decades, early demonstrations were limited to single EDC systems, single EHRs, and single-site studies, used older standards or none at all, and were largely conducted outside the context of an ongoing clinical trial.118 Unlike most other study data sources, direct EHR-to-EDC data collection shares many of the fundamental shifts seen with EDC, in that roles, processes, information flow, and needed skills are affected for sites, sponsors, and regulators alike, at the level of individuals, groups, and organizations. Like EDC, direct data collection from EHRs offers possibilities not available today, such as decreasing the data collection burden on sites, detecting and correcting data quality problems at the source, extending an unbroken chain of traceability back to the exact data value in the source, extending safety surveillance for years into the future, and assessing the generalizability of study results. Before these and other opportunities not yet conceived can be pursued, we must climb the adoption curve. The cost and time pressures in therapeutic development today will likely not withstand another two- to four-decade wait. The similarities between EDC and direct EHR data collection are striking. Thus, the lessons learned from EDC implementation, adoption, and scale-up offer knowledge and guidance toward more direct and streamlined testing, evolution, adoption, implementation, and optimization of the EHR as an important data source in the development, testing, and monitoring of new therapeutics.
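As a hedged illustration of EHR-to-eCRF data flow, the Python sketch below maps FHIR Observation resources, assumed to have already been retrieved from a site EHR and parsed from JSON, to flat eCRF items while keeping a reference back to the source resource for traceability. Only standard Observation elements (`resourceType`, `id`, `code.coding`, `valueQuantity`, `effectiveDateTime`) are read; the eCRF field names and the LOINC-to-field mapping are hypothetical, and a production pipeline would also need unit conversion, authentication, and provenance handling.

```python
from typing import Any, Dict, List

LOINC_SYSTEM = "http://loinc.org"

# Hypothetical mapping from LOINC codes to eCRF field names.
LOINC_TO_ECRF: Dict[str, str] = {
    "8480-6": "SYSBP",  # Systolic blood pressure
    "8462-4": "DIABP",  # Diastolic blood pressure
}


def observation_to_ecrf(obs: Dict[str, Any]) -> List[Dict[str, Any]]:
    """Map one FHIR Observation (parsed JSON) to zero or more eCRF items."""
    if obs.get("resourceType") != "Observation" or "valueQuantity" not in obs:
        return []
    qty = obs["valueQuantity"]
    items: List[Dict[str, Any]] = []
    for coding in obs.get("code", {}).get("coding", []):
        if coding.get("system") != LOINC_SYSTEM:
            continue
        field = LOINC_TO_ECRF.get(coding.get("code", ""))
        if field is None:
            continue  # not a data element collected by this study
        items.append({
            "field": field,
            "value": qty.get("value"),
            "unit": qty.get("unit"),
            "collected": obs.get("effectiveDateTime"),
            # Pointer back to the exact source resource, for traceability.
            "source_ref": f"Observation/{obs.get('id')}",
        })
    return items


example = {
    "resourceType": "Observation",
    "id": "bp-123",
    "code": {"coding": [{"system": LOINC_SYSTEM, "code": "8480-6"}]},
    "valueQuantity": {"value": 128, "unit": "mmHg",
                      "system": "http://unitsofmeasure.org", "code": "mm[Hg]"},
    "effectiveDateTime": "2024-03-01T09:30:00Z",
}
```

Calling `observation_to_ecrf(example)` yields one `SYSBP` item carrying `source_ref` `Observation/bp-123`. Note that EHRs often record blood pressure as a panel with systolic and diastolic values in `component` entries, which this sketch does not handle.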

Limitations

Though extensive attempts were made to identify all seminal events and relevant evaluations in the history of EDC, few of these were published in the peer-reviewed literature. Many pertinent and important articles and papers may have been missed because they were published in outlets not indexed or preserved for academic retrieval. This review entirely misses the likely substantial work undertaken and communicated only within organizations. Additionally, one individual extracted information for this historical review. Although the synthesis was independently reviewed by the two co-authors, the initial extraction is subject to human error and to the bias associated with the first author’s single-reviewer synthesis. Commentary on the article is encouraged to counter any bias or missed information relevant to this review. In this vein, materials used in this review will be made available for re-interpretation and analysis.

Conclusions

In this comprehensive review of the EDC literature, the large number of articles identified through references rather than through indexed literature search is striking. This likely indicates that organizational leaders and practitioners pursued EDC pilots and adoption in the absence of the knowledge extant at the time, or on the basis of anecdote. This likely slowed EDC adoption. The synthesis of EDC benefits and barriers presented here may inform future evaluation of technology for use in clinical studies. Literature synthesis, as is currently being done in the Good Clinical Data Management Practices (GCDMP), and the pre-competitive information sharing common today should benefit those employing new technology or methods in the design, conduct, and reporting of clinical studies.

Based on the study designs employed in the reviewed EDC evaluation articles, very few EDC evaluations were conducted with rigorous designs capable of supporting causal inference that EDC technology directly brought about positive change in quality, cost, or time metrics. Disparate metrics reported in the EDC literature further impede progress by precluding comparisons and quantitative synthesis. We do conclude, however, that the published evidence supports the finding that EDC facilitates faster acquisition of data with fewer discrepancies. The evidence also indicates that significant room exists for decreasing data collection cycle times, and that this can be achieved through entry (or transfer) and review of data as soon as possible. The evidence does not support claims that overall data accuracy is improved. The dearth of measurement and reporting of source-to-EDC data accuracy is quite surprising, especially because EDC technology today can directly support such calculation. This constitutes a significant oversight by organizations conducting clinical studies. The most important question with respect to use of any data, and especially data used in regulatory decision-making, is whether the data are of sufficient quality to support intended decisions. This has not been proven in a generalizable way for EDC. We surmise that this lapse is fueled by the faulty perception that errors in the source cannot be detected or corrected, or by the mistaken belief that translation of source data into EDC is seamless and highly accurate. The lack of EDC data quality assessment, in particular accuracy measurement, in clinical studies should be remedied immediately.
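Because source-to-EDC accuracy is described above as directly computable, a minimal Python sketch of that calculation may help. It compares EDC values against source values field by field, counts discrepancies among the fields inspected, and scales the result to errors per 10,000 fields, a normalization commonly used for error rates in clinical data management. The record layout (records keyed by participant and visit) and the exact-match comparison are simplifying assumptions; a real audit would define per-field tolerances and adjudicate each discrepancy.

```python
from typing import Dict, Hashable, Tuple

Record = Dict[str, object]


def source_to_edc_error_rate(source: Dict[Hashable, Record],
                             edc: Dict[Hashable, Record]) -> Tuple[int, int, float]:
    """Compare EDC values against source values field by field.

    Returns (errors, fields_inspected, errors_per_10000_fields). A field counts
    as an error if it is absent from EDC or its value differs from the source
    (exact match, an assumption made for simplicity).
    """
    errors = inspected = 0
    for key, src_fields in source.items():
        edc_fields = edc.get(key, {})
        for name, src_value in src_fields.items():
            inspected += 1
            if name not in edc_fields or edc_fields[name] != src_value:
                errors += 1
    rate = errors / inspected * 10_000 if inspected else 0.0
    return errors, inspected, rate
```

For instance, if a systolic value of 135 in the source was entered as 153 in EDC and the other three inspected fields match, the function reports 1 error in 4 fields, or 2,500 errors per 10,000 fields.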

The review identified multiple future directions for moving beyond today’s EDC, including: (1) Providing easy, real-time, and seamless acquisition and integration of clinical data; (2) Providing easy, real-time, and seamless interoperability with other operational information systems used in clinical studies; (3) Measuring the accuracy of study data; (4) Supporting study conduct and management through automation and decision support; and (5) Re-thinking and moving existing boundaries in therapeutic development through real-time exchange of computationally accessible data. Potential examples of the latter include clinical verification of direct-to-consumer data and broader early use of therapeutics enabled by direct EHR and claims data acquisition and surveillance. In most other industries, new or faster availability of information has opened up entirely new opportunities. We are only beginning to use information technology such as EDC and available data such as routine care and claims data to benefit the development of new therapeutics and protect the health of the public that uses them.

Appendix: Description of Quantitative Evaluations of EDC

Evaluation 1

Banik and Mochow, presented in 1998.